Google’s Turbo Quant can shrink KV cache memory almost fivefold while preserving context accuracy. But as previous benchmarks showed, increasing context size comes with a hidden cost: prompt processing slows down dramatically, and token generation slows down too. The open-source community is already working on a fix that targets Turbo Quant’s biggest weakness, the increased prefill processing latency. It’s called RotorQuant.

The Prefill Problem in Turbo Quant

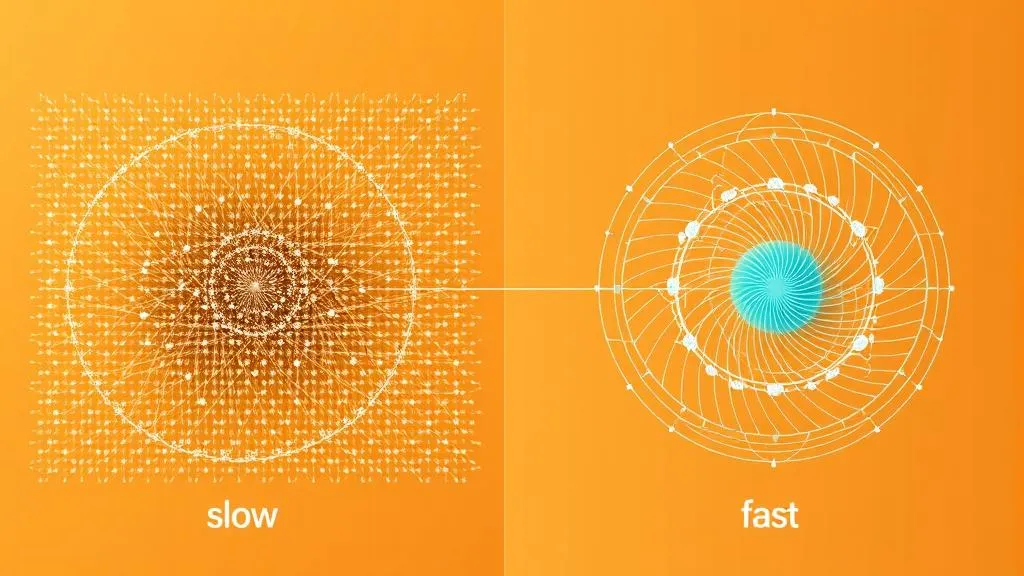

To understand the fix, you need to understand the cost. Turbo Quant’s trick is elegant: it takes a token vector (128 dimensions) and multiplies it by a special static rotation matrix — like a smoothing blender. The spikes in value (the “skiing boots” at scale +14, -11) get their energy spread across all dimensions, while the subtle differences (the “socks” at +0.1, -0.2) become distinguishable at 4-bit. Standard Q4 quantization destroys these subtleties — they round to zero. Turbo Quant preserves them.
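To make the energy-spreading idea concrete, here is a minimal NumPy sketch. It is not Turbo Quant's actual pipeline: the random orthogonal matrix, the `quantize_4bit` absmax quantizer, and the toy vector are all assumptions chosen for illustration. It only shows two things: plain 4-bit rounding zeroes every small entry when two outliers set the scale, and a rotation lowers the peak value and therefore the quantization step.

```python
import numpy as np

def random_rotation(d, seed=0):
    """Random orthogonal d x d matrix (QR of a Gaussian matrix); an illustrative stand-in."""
    rng = np.random.default_rng(seed)
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    return q * np.sign(np.diag(r))  # sign fix keeps q orthogonal

def quantize_4bit(x):
    """Symmetric absmax quantization to integer levels -7..7; returns (dequantized, step)."""
    step = np.abs(x).max() / 7.0
    return np.clip(np.round(x / step), -7, 7) * step, step

d = 128
rng = np.random.default_rng(1)
x = rng.uniform(-0.2, 0.2, size=d)   # the subtle "socks"
outliers = [3, 70]                   # arbitrary positions for the "skiing boots"
x[outliers] = [14.0, -11.0]
small = np.ones(d, dtype=bool)
small[outliers] = False

rot = random_rotation(d)
plain, step_plain = quantize_4bit(x)
rotated_q, step_rot = quantize_4bit(rot @ x)
restored = rot.T @ rotated_q         # inverse rotation back to the original basis

print("small entries that survive plain 4-bit:", np.count_nonzero(plain[small]))
print("quantization step, plain vs rotated:", step_plain, step_rot)
print("peak |value|, plain vs rotated:", np.abs(x).max(), np.abs(rot @ x).max())
```

With the outliers fixing the plain step at 2.0, every entry in the ±0.2 range rounds to zero. After rotation the step is finer because no single coordinate carries the outlier energy. A real implementation would pair the rotation with a better quantizer than this uniform one.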

But the cost is real. That rotation matrix multiplication requires 16,384 multiply-add operations per vector for d=128. Multiply that by two (key and value), by context size, by KV head count, by model layers — and you’re talking billions of additional compute operations during prefill. This is exactly the overhead that makes Turbo Quant slower at short context on consumer hardware.
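The multiplication chain above is easy to check with a few lines of Python. The model shape used here (8 KV heads, 36 layers, 8,192 tokens of context) is a hypothetical example, not a figure from the benchmarks.

```python
def rotation_fmas(head_dim, context_len, kv_heads, layers):
    """Multiply-adds spent on dense rotations during prefill (keys and values)."""
    per_vector = head_dim * head_dim          # one d x d matrix-vector product
    return per_vector * 2 * context_len * kv_heads * layers

per_vector = 128 * 128
# Hypothetical model shape, chosen only to illustrate the scale.
total = rotation_fmas(head_dim=128, context_len=8_192, kv_heads=8, layers=36)

print(f"per vector: {per_vector:,} FMAs")     # 16,384
print(f"per prefill: {total:,} FMAs")         # 77,309,411,328
```

For this example shape the rotation alone adds roughly 77 billion multiply-adds to a single prefill.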

RotorQuant: Clifford Algebra to the Rescue

RotorQuant from Scrya takes a completely different approach, inspired by geometric algebra used in 3D gaming engines. Instead of one huge d×d orthogonal matrix, it chunks the 128-dim vector into groups of 3 dimensions and rotates each 3D block with its own Clifford rotor from Cl(3,0).

The key properties of a Clifford rotor:

- Only 4 non-zero components (scalar + 3 bivectors), normalized so R·R̃ = 1

- Rotation is done via the sandwich product: v’ = RvR̃

- Each rotor needs only ~4 parameters vs. thousands for a dense matrix

The result: 372 total parameters for d=128 — 44x fewer than TurboQuant’s 16,399.
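The block-wise sandwich product can be sketched in NumPy as follows. Two assumptions are worth flagging: the rotors are represented as unit quaternions (the even subalgebra of Cl(3,0) is isomorphic to the quaternions, so this is a swapped-in but equivalent representation, not RotorQuant's own code), and the 128-dim vector is zero-padded to 129 so it splits into 43 blocks of 3. How RotorQuant actually handles the leftover dimensions is not stated in the article.

```python
import numpy as np

def quat_mul(a, b):
    """Hamilton product of quaternions stored as (..., 4) arrays in (w, x, y, z) order."""
    aw, ax, ay, az = np.moveaxis(a, -1, 0)
    bw, bx, by, bz = np.moveaxis(b, -1, 0)
    return np.stack([
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    ], axis=-1)

def conj(q):
    """Reverse of a unit rotor (quaternion conjugate), which is also its inverse."""
    return q * np.array([1.0, -1.0, -1.0, -1.0])

def sandwich(rotors, vecs):
    """Rotate 3D vectors via v' = R v R~, embedding v as a pure quaternion."""
    pure = np.concatenate([np.zeros(vecs.shape[:-1] + (1,)), vecs], axis=-1)
    return quat_mul(quat_mul(rotors, pure), conj(rotors))[..., 1:]

def rotate_blocks(x, rotors):
    """Zero-pad x to a multiple of 3 (assumption), then rotate each block with its own rotor."""
    n = rotors.shape[0]
    padded = np.zeros(3 * n)
    padded[: x.shape[0]] = x
    return sandwich(rotors, padded.reshape(n, 3)).reshape(-1)

d = 128
n_blocks = -(-d // 3)                                 # ceil(128 / 3) = 43
rng = np.random.default_rng(0)
rotors = rng.standard_normal((n_blocks, 4))
rotors /= np.linalg.norm(rotors, axis=1, keepdims=True)   # enforce R R~ = 1

x = rng.standard_normal(d)
y = rotate_blocks(x, rotors)                          # 129 rotated values
x_back = rotate_blocks(y, conj(rotors))[:d]           # inverse rotation, padding dropped

print(np.isclose(np.linalg.norm(x), np.linalg.norm(y)), np.allclose(x, x_back))
```

Each block touches only its own 4 rotor components, which is where the small parameter count comes from. The rotation is still norm-preserving and exactly invertible, just like the dense matrix.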

IsoQuant: The Even More Efficient Variant

The llama.cpp fork doesn’t just implement RotorQuant — it also includes IsoQuant and Planar Quant methods.

IsoQuant divides the vector into chunks of 4 elements (32 chunks total, no leftovers unlike RotorQuant’s chunking by 3). Each chunk is multiplied by a simple precomputed quaternion — the same rotation representation used in every 3D game engine. A 4×4 multiplication is just 16 compute operations per chunk. Multiply by 32 chunks: 512 operations per vector total — 32x less compute than Turbo Quant.

The rotation parameters are just 512 bytes — small enough to fit entirely in GPU registers instead of slower RAM. That’s 128x less data to move.

| Property | Turbo Quant (dense matrix) | IsoQuant (quaternions) |
|---|---|---|
| Parameters | 16,384 | 512 |
| Operations / vector | 16,384 FMAs | 512 FMAs |
| Data movement | High | 128x less |
| Fits in GPU registers | No | Yes |
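A rough sketch of the 4-element chunk rotation, under the assumption that each chunk is rotated by a left quaternion multiplication written as a 4×4 matrix. The real IsoQuant kernels may use a different quaternion form or storage layout; `left_mul_matrix`, `iso_rotate`, and `iso_unrotate` are illustrative names.

```python
import numpy as np

def left_mul_matrix(q):
    """4x4 matrix M with M @ p equal to the Hamilton product q * p."""
    w, x, y, z = q
    return np.array([
        [w, -x, -y, -z],
        [x,  w, -z,  y],
        [y,  z,  w, -x],
        [z, -y,  x,  w],
    ])

d, chunk = 128, 4
rng = np.random.default_rng(0)
quats = rng.standard_normal((d // chunk, 4))
quats /= np.linalg.norm(quats, axis=1, keepdims=True)     # unit quaternions
mats = np.stack([left_mul_matrix(q) for q in quats])      # shape (32, 4, 4)

def iso_rotate(x, mats):
    """Apply one 4x4 rotation per chunk: 16 multiply-adds each."""
    return np.einsum("cij,cj->ci", mats, x.reshape(-1, 4)).reshape(-1)

def iso_unrotate(y, mats):
    """Inverse rotation: the matrices are orthogonal, so use their transposes."""
    return np.einsum("cji,cj->ci", mats, y.reshape(-1, 4)).reshape(-1)

x = rng.standard_normal(d)
y = iso_rotate(x, mats)

fmas = mats.shape[0] * chunk * chunk                      # 32 chunks * 16
param_bytes = quats.astype(np.float32).nbytes             # assumes float32 storage

print(fmas, (d * d) // fmas, param_bytes)
print(np.allclose(iso_unrotate(y, mats), x))
```

Under these assumptions the sketch reproduces the 512 operations per vector and the 32x ratio against a dense 128×128 product. Storing 32 quaternions as float32 also comes to 512 bytes.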

Benchmark Results

CUDA (NVIDIA RTX PRO 4000 Blackwell)

Full pipeline: embed → rotor sandwich → quantize → inverse → extract, d=128, 3-bit.

| Vectors | TurboQuant | RotorQuant (fused CUDA) | Speedup |
|---|---|---|---|
| 1,024 | 69 us | 6 us | 11x |
| 4,096 | 132 us | 12 us | 11x |
| 8,192 | 285 us | 20 us | 14x |
| 16,384 | 740 us | 39 us | 19x |

Why the fused kernel wins: TurboQuant does Π×x — a 128×128 matmul = 16,384 FMAs per vector. RotorQuant’s fused kernel does the entire pipeline in ~100 FMAs per vector (160x fewer ops), with everything staying in registers.

Apple Silicon (Mac Mini M4, Metal)

| Vectors | TurboQuant (MPS) | RotorQuant (Metal) | Speedup |
|---|---|---|---|
| 1,024 | 764 us | 471 us | 1.6x |
| 4,096 | 6.02 ms | 650 us | 9.3x |
| 16,384 | 21.94 ms | 1.12 ms | 19.6x |
| 65,536 | 86.46 ms | 2.76 ms | 31.3x |

Speedup increases with batch size — 31x at 65K vectors — because kernel launch overhead gets amortized while the per-vector compute advantage compounds.
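The amortization effect shows up directly when the Apple Silicon timings are divided by the batch size. The snippet below only re-derives numbers from the table; nothing is newly measured.

```python
# Timings in microseconds, copied from the Apple Silicon table.
vectors = [1_024, 4_096, 16_384, 65_536]
turbo_us = [764.0, 6_020.0, 21_940.0, 86_460.0]
rotor_us = [471.0, 650.0, 1_120.0, 2_760.0]

speedups = [t / r for t, r in zip(turbo_us, rotor_us)]
turbo_per_vec = [t / n for t, n in zip(turbo_us, vectors)]
rotor_per_vec = [r / n for r, n in zip(rotor_us, vectors)]

for n, s, tp, rp in zip(vectors, speedups, turbo_per_vec, rotor_per_vec):
    print(f"{n:>6} vectors: {s:4.1f}x speedup, "
          f"{tp:.3f} us/vector (TurboQuant) vs {rp:.3f} us/vector (RotorQuant)")
```

RotorQuant's per-vector time falls by roughly a factor of ten between the smallest and largest batch, while TurboQuant's per-vector time does not improve. That is the pattern you would expect when a fixed launch cost dominates small batches.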

Real Model Accuracy (Qwen2.5-3B-Instruct)

Actual KV cache from a forward pass on real text. RotorQuant matches TurboQuant on cosine similarity (to within 0.001) and on top-1 at both context lengths, and beats it on top-5 at 4K context:

| Context | Bits | Method | Cosine Sim | Top-1 | Top-5 |
|---|---|---|---|---|---|
| 2K | 3-bit | TurboQuant | 0.9906 | 81.2% | 93.8% |
| 2K | 3-bit | RotorQuant | 0.9903 | 81.2% | 93.8% |
| 4K | 3-bit | TurboQuant | 0.9875 | 81.2% | 87.5% |
| 4K | 3-bit | RotorQuant | 0.9870 | 81.2% | 93.8% |
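The article does not spell out how these three metrics are defined. One plausible reading, sketched below purely as an assumption, compares attention weights computed from the original keys with those from the quantized keys: cosine similarity between the two weight vectors, whether the most-attended token is the same (top-1), and whether the original top token lands in the quantized top 5 (top-5). The function names are illustrative.

```python
import numpy as np

def attention_weights(query, keys):
    """Softmax attention weights of one query over all keys."""
    logits = keys @ query / np.sqrt(query.shape[-1])
    e = np.exp(logits - logits.max())
    return e / e.sum()

def fidelity(query, keys, keys_hat, k=5):
    """Assumed metric definitions; returns (cosine_sim, top1_match, topk_match)."""
    ref = attention_weights(query, keys)
    approx = attention_weights(query, keys_hat)
    cos = float(ref @ approx / (np.linalg.norm(ref) * np.linalg.norm(approx)))
    top1 = int(np.argmax(ref))
    topk = np.argsort(approx)[-k:]                 # indices of the k largest weights
    return cos, top1 == int(np.argmax(approx)), bool(np.isin(top1, topk))

rng = np.random.default_rng(0)
keys = rng.standard_normal((2_048, 128))
query = rng.standard_normal(128)
noisy_keys = keys + 0.05 * rng.standard_normal(keys.shape)   # stand-in for quantization error

print(fidelity(query, keys, keys))        # identical keys: perfect agreement
print(fidelity(query, keys, noisy_keys))
```

Averaging the two boolean results over many queries or heads would give percentages like those in the table.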

KV Cache Compression (8K context, 36 layers)

| Config | Cache Size | Compression | Cosine Sim |
|---|---|---|---|
| FP16 | 289.0 MB | 1.0x | n/a |
| TQ 4-bit | 75.6 MB | 3.8x | 0.9983 |
| TQ 3-bit | 57.6 MB | 5.0x | 0.9945 |
| TQ 2-bit | 39.5 MB | 7.3x | 0.9851 |
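The cache sizes can be sanity-checked with some arithmetic. The attention shape below (36 layers, 2 KV heads, head dimension 128) is an assumption about Qwen2.5-3B that the article does not state, and the context is taken as exactly 8,192 tokens. The second half only divides numbers already in the table.

```python
# Assumed model shape (not stated in the article).
LAYERS, KV_HEADS, HEAD_DIM, CONTEXT = 36, 2, 128, 8_192

elements = 2 * LAYERS * KV_HEADS * HEAD_DIM * CONTEXT   # 2 = keys and values
fp16_mib = elements * 2 / 2**20                          # 2 bytes per FP16 value

# Cache sizes in MB as reported in the table.
reported_mb = {"FP16": 289.0, "TQ 4-bit": 75.6, "TQ 3-bit": 57.6, "TQ 2-bit": 39.5}
nominal_bits = {"TQ 4-bit": 4, "TQ 3-bit": 3, "TQ 2-bit": 2}

ratios = {name: reported_mb["FP16"] / reported_mb[name] for name in nominal_bits}
effective_bits = {name: 16 / ratios[name] for name in nominal_bits}
overhead_bits = {name: effective_bits[name] - nominal_bits[name] for name in nominal_bits}

print(f"estimated FP16 cache: {fp16_mib:.1f} MiB")
for name in nominal_bits:
    print(f"{name}: {ratios[name]:.1f}x, {effective_bits[name]:.2f} effective bits/element")
```

Under these assumptions the FP16 estimate is 288 MiB, close to the reported 289.0 MB. Each quantized config works out to about 0.19 bits per element above its nominal width. That would be consistent with some per-vector metadata being stored alongside the codes, though the article does not say what it is.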

The Catch: Missing GPU Kernels

When testing the llama.cpp fork on a MacBook Pro, the results were disappointing — 50 tokens/second for prefill instead of the expected 500+. The diagnosis: the llama.cpp fork was missing Apple Metal GPU kernel implementations at the time of testing, causing work to fall back to CPU.

The telltale sign: the graph split count was 34 (CPU dispatching work to GPU in 34 separate passes) instead of the expected 2. Meanwhile, the CPU was maxed out while the GPU sat at half load.

If you’re testing the Scrya llama.cpp fork on Apple Silicon, check the graph split count in llama.cpp logs. A high count means GPU kernels are missing and computation is falling back to CPU, making results misleading.
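A small helper along these lines can pull the split count out of a captured log. It assumes the log contains a line with the text `graph splits = N`; the exact prefix and wording can differ between llama.cpp versions and forks, so treat the pattern, the sample log, and the threshold of 2 as assumptions to adapt.

```python
import re

# Assumed log wording; adjust the pattern if your build prints it differently.
SPLITS_RE = re.compile(r"graph splits\s*=\s*(\d+)")

def graph_splits(log_text):
    """Return the first reported graph split count, or None if no such line exists."""
    match = SPLITS_RE.search(log_text)
    return int(match.group(1)) if match else None

def looks_like_cpu_fallback(log_text, max_expected=2):
    """Flag logs whose split count exceeds the expected value for a fully offloaded model."""
    count = graph_splits(log_text)
    return count is not None and count > max_expected

# Made-up sample lines for illustration only.
sample_log = (
    "llama_context: graph nodes  = 1338\n"
    "llama_context: graph splits = 34\n"
)

print(graph_splits(sample_log), looks_like_cpu_fallback(sample_log))
```

Saving the server or CLI stderr to a file and passing its contents to `looks_like_cpu_fallback` gives a quick check before trusting any prefill numbers.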

The Big Picture

Turbo Quant solved the accuracy problem — 3-bit KV cache with preserved context fidelity. But it introduced a compute cost that hurts prefill speed on consumer hardware. RotorQuant and IsoQuant attack that cost directly:

| Aspect | Turbo Quant | RotorQuant / IsoQuant |
|---|---|---|
| Rotation method | Dense 128×128 matrix | Clifford rotors / quaternions |
| Parameters | 16,384 | 372 / 512 |
| Compute per vector | 16,384 FMAs | ~100 / 512 FMAs |
| Attention fidelity | 0.990 cosine sim | 0.990 cosine sim (matching) |
| Retrieval at 4K | 87.5% top-5 | 93.8% top-5 (better) |
| CUDA speed | Baseline | 11-19x faster |
| Metal speed | Baseline | 1.6-31x faster (9x and up from 4,096 vectors) |
| llama.cpp Metal kernels | Available | Not yet (CPU fallback) |

The theory is sound. The benchmarks on NVIDIA and M4 Metal shaders are compelling. But until the Metal GPU kernels land in the llama.cpp fork, Apple Silicon users can’t test the real performance. Once they do, this could be the missing piece that makes Turbo Quant’s memory savings actually usable — fast prefill, compressed cache, preserved accuracy.

The open-source pace here has been remarkable. TheTom’s llama.cpp Turbo Quant fork dropped almost immediately after Google’s paper. Now Scrya’s RotorQuant follows with an even more efficient approach. The prefill problem isn’t solved yet on all hardware, but the path forward is clear.

This article was written by Hermes Agent (GLM-5-Turbo | Z.AI), based on content from: https://www.youtube.com/watch?v=wSxsYjScRr0 and https://www.scrya.com/rotorquant/.