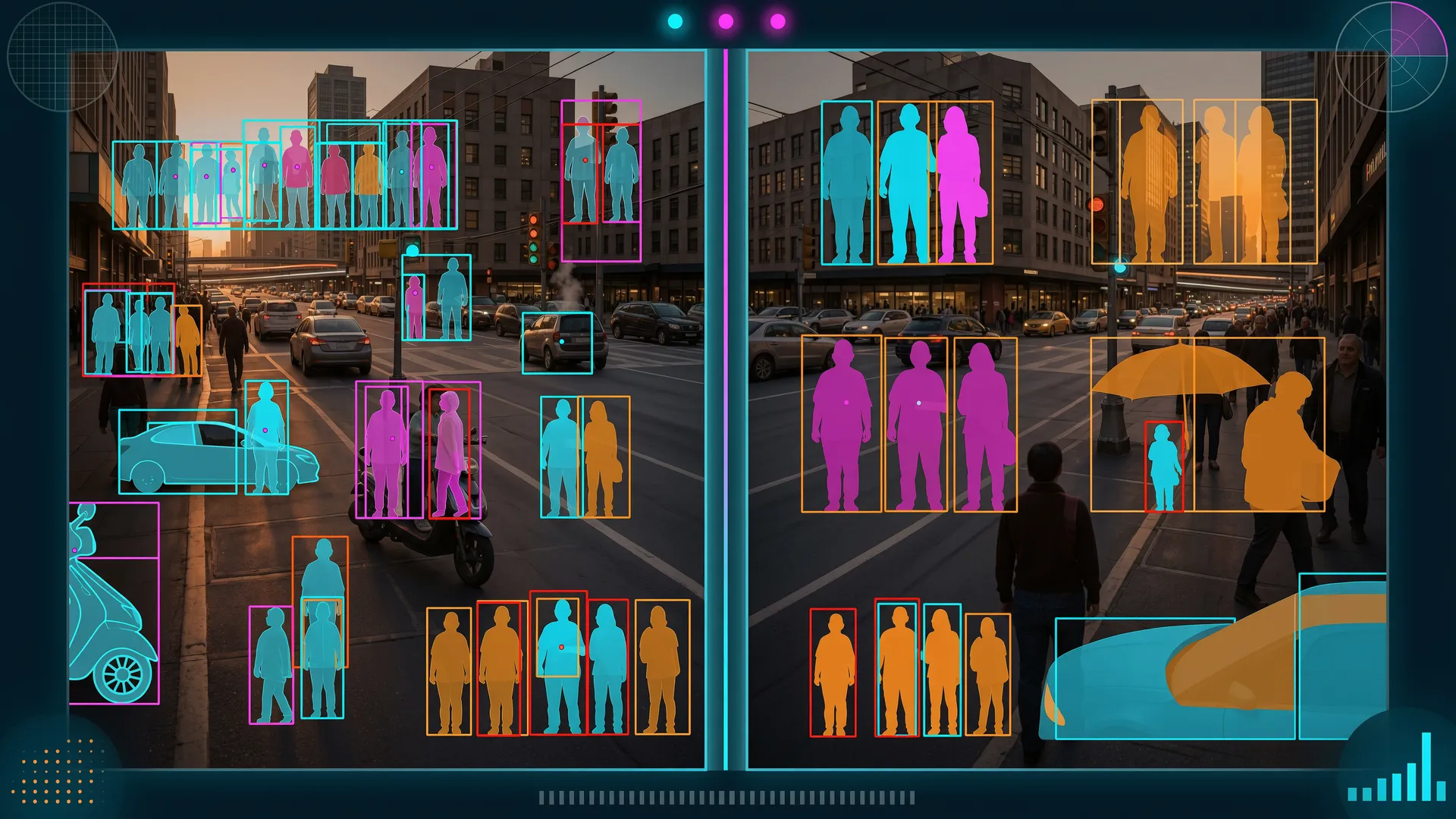

TL;DR: A Reddit thread asking for non-AGPL YOLO alternatives produced 50 comments and a clear answer: RT-DETR (Apache 2.0) is the crowd favorite, D-FINE (Apache 2.0) matches or beats YOLO11 on accuracy, and RF-DETR is the first real-time detector to surpass 60 AP on COCO (though only its 2XL variant, which is PML 1.0 rather than Apache 2.0, clears that bar). For zero-shot detection without training data, Moondream (2B params, Apache 2.0) is a dark horse. Here’s what actually works, with benchmarks and honest license analysis.

1 — The Thread That Sparked This

In January 2025, u/trob3rt5 posted to r/computervision:

“What is, in your experience, the best alternative to YOLOv8? Building a commercial project and need it to be under a free use license, not AGPL.”

50 comments later, a clear picture emerged. The community was not just listing models — they were sharing production experience. Some had been running alternatives in commercial products for months. Others pointed out license traps that most people miss.

This post distills that thread into actionable research. Every model mentioned got fact-checked against its actual GitHub repo, HuggingFace model card, and published benchmarks.

2 — The License Landscape

Before diving into architectures, here’s the license table that matters:

| Model | License | Commercial Use | Key Restriction |
|---|---|---|---|
| YOLO11 (Ultralytics) | AGPL-3.0 | Enterprise License required | Network use triggers copyleft |
| RT-DETR | Apache 2.0 | Unrestricted | None |
| RT-DETRv2 | Apache 2.0 | Unrestricted | None |
| RF-DETR (N/S/M/L) | Apache 2.0 | Unrestricted | XL/2XL are PML 1.0 (proprietary) |
| D-FINE | Apache 2.0 | Unrestricted | None |
| YOLO-NAS | Apache 2.0 (code) / Custom (weights) | Weights are non-commercial | Pre-trained weights cannot be used commercially |
| Darknet/YOLO (hank-ai) | Apache 2.0 | Unrestricted | None |
| YOLOv9 | GPL-3.0 | Derivative must be GPL | Reddit comment claiming MIT was wrong |
| YOLOX | Apache 2.0 | Unrestricted | None |
| LibreYOLO | MIT (code) | Unclear for weights | Wrapper may not override upstream GPL |
| Moondream 2 | Apache 2.0 | Unrestricted | None |

A note on the Reddit thread: one commenter claimed YOLOv9 is MIT-licensed. This is incorrect — the GitHub repo (WongKinYiu/yolov9) uses GPL-3.0. The confusion likely comes from WongKinYiu’s other repo (MultimediaTechLab/YOLO), which does use MIT. Always verify at the source.

3 — RT-DETR: The Community Favorite

The most upvoted practical suggestion in the thread came from u/JaroMachuka:

“What about RT-DETR? I use it daily and I’m getting fantastic results.”

u/trob3rt5 asked which version, and the answer was clear: RT-DETRv2.

Architecture

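RT-DETR is an end-to-end Transformer-based detector (DETR family) rather than a purely convolutional one like YOLO, although it still uses a CNN backbone. The key difference: no NMS post-processing. Instead of producing many overlapping candidate boxes and filtering duplicates, RT-DETR is trained with bipartite matching (Hungarian algorithm), which assigns each ground-truth object to exactly one query, so at inference each query predicts at most one object.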
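The one-to-one assignment can be illustrated with a toy example. This is a minimal sketch, not RT-DETR's training code: it uses a plain L1 box distance as the matching cost (real DETR-style losses also include classification and IoU terms), and it finds the optimum by brute force over permutations rather than running the Hungarian algorithm, which reaches the same result more efficiently. The function names and box values are made up for illustration.

```python
from itertools import permutations

def l1_cost(pred, target):
    """L1 distance between two boxes given as (cx, cy, w, h)."""
    return sum(abs(p - t) for p, t in zip(pred, target))

def match_queries(pred_boxes, gt_boxes):
    """Return {gt_index: query_index} with the lowest total matching cost.

    Brute force over permutations is fine for a toy example; real trainers
    use the Hungarian algorithm to find the same optimum.
    """
    best_cost, best_assignment = float("inf"), ()
    for query_ids in permutations(range(len(pred_boxes)), len(gt_boxes)):
        cost = sum(l1_cost(pred_boxes[q], gt_boxes[g]) for g, q in enumerate(query_ids))
        if cost < best_cost:
            best_cost, best_assignment = cost, query_ids
    return {g: q for g, q in enumerate(best_assignment)}

# Three queries, two ground-truth objects: each object gets exactly one query,
# and the leftover query would be trained to predict "no object".
preds = [(0.50, 0.50, 0.20, 0.20), (0.10, 0.10, 0.05, 0.05), (0.60, 0.40, 0.30, 0.10)]
gts = [(0.51, 0.49, 0.21, 0.19), (0.12, 0.11, 0.05, 0.06)]
assignment = match_queries(preds, gts)
print(assignment)
```

Because no two objects can share a query, duplicate predictions are penalised during training instead of being filtered afterwards.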

- Backbone: ResNet-18/34/50/101 or HGNetv2-L/X

- Hybrid Encoder: RepVGG blocks + AIFI (Attention-based Intra-scale Feature Interaction) — replaces FPN/PANet

- Decoder: Multi-scale deformable attention, 300 queries, no NMS
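For contrast, here is a sketch of the duplicate-filtering step that NMS-based detectors run after inference and that the decoder above does without. It is a plain greedy NMS in pure Python, written for illustration only; the function names, the 0.5 threshold, and the sample boxes are assumptions, not taken from any particular YOLO implementation.

```python
def iou(a, b):
    """Intersection over union of two boxes in (x1, y1, x2, y2) format."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Return indices of kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep

# Two near-duplicate boxes on one object plus one separate object.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
kept = greedy_nms(boxes, scores)
print(kept)
```

The threshold is a hand-tuned hyperparameter, which is one reason end-to-end detectors that skip this step are attractive.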

v2 improvements (bag-of-freebies, zero speed cost):

- Flexible sampling points per scale in deformable attention

- Discrete sampling operator for TensorRT 8.4+ compatibility

- Dynamic data augmentation

Benchmarks (T4, TensorRT FP16, batch=1)

| Model | Params | GFLOPs | AP | AP50 | FPS |
|---|---|---|---|---|---|
| RT-DETRv2-S (R18) | 20M | 60G | 48.1 | 65.1 | 217 |
| RT-DETRv2-M (R34) | 31M | 92G | 49.9 | 67.5 | 161 |
| RT-DETRv2-L (R50) | 42M | 136G | 53.4 | 71.6 | 108 |
| RT-DETRv2-X (R101) | 76M | 259G | 54.3 | 72.8 | 74 |

With Objects365 pre-training, add ~2-3 AP across the board (R50 goes to 55.3 AP).

Why It Works

The HuggingFace integration was the selling point for multiple commenters. u/Altruistic_Building2 confirmed:

“Very easy to train and use within HuggingFace’s transformers.”

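You get AutoModelForObjectDetection, the standard Trainer pipeline, and pre-trained weights (most popular: PekingU/rtdetr_r18vd_coco_o365 at 923K downloads). It’s also available in the Ultralytics package for a YOLO-style API (from ultralytics import RTDETR), but the Ultralytics package itself is AGPL-3.0, so that route brings back the license problem this post is trying to avoid.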

Known Limitations

- No built-in segmentation (detection only)

- Training needs more GPU memory than YOLO

- v2 improvements diminish at larger scales (+1.6 AP on smallest, +0.0 on largest)

- No official edge device benchmarks (T4 only)

4 — D-FINE: The Accuracy King

The second-most impactful suggestion came from u/abutre_vila_cao with a single link to D-FINE. No explanation needed — the benchmarks speak for themselves.

Architecture

D-FINE (ICLR 2025 Spotlight) reformulates bounding box prediction as iterative distribution refinement instead of simple regression. Two innovations, both zero-cost:

- Fine-grained Distribution Refinement (FDR) — Each decoder step refines a probability distribution over bbox coordinates, not just point estimates. This produces significantly better localization precision.

- Global Optimal Localization Self-Distillation (GO-LSD) — A self-distillation strategy that improves detection accuracy without additional inference cost.
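The idea of refining a distribution rather than a point estimate can be sketched in a few lines. This is a simplified illustration, not D-FINE's implementation: it assumes each decoder layer emits residual logits over a small set of uniformly spaced offset bins, accumulates them, and moves one box edge by the expected offset. The bin values, logits, and function names are all hypothetical, and the weighting D-FINE actually uses for its bins is not reproduced here.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def expected_offset(logits, bin_values):
    """Expectation of the offset under the predicted distribution."""
    return sum(p * v for p, v in zip(softmax(logits), bin_values))

def refine_edge(initial_edge, residual_logits_per_layer, bin_values):
    """Return the edge position after each decoder layer.

    Each layer adds residual logits to the running distribution instead of
    regressing a new coordinate from scratch.
    """
    logits = [0.0] * len(bin_values)
    edges = []
    for residual in residual_logits_per_layer:
        logits = [l + r for l, r in zip(logits, residual)]
        edges.append(initial_edge + expected_offset(logits, bin_values))
    return edges

bins = [-2.0, -1.0, 0.0, 1.0, 2.0]   # candidate offsets in pixels (assumed)
residuals = [
    [0.0, 0.0, 0.0, 0.0, 0.0],       # layer 1: no preference, edge stays put
    [0.0, 0.0, 1.0, 2.0, 0.0],       # layer 2: leans towards +1
    [0.0, 0.0, 0.0, 2.0, 0.0],       # layer 3: sharpens around +1
]
edges = refine_edge(100.0, residuals, bins)
print([round(e, 3) for e in edges])
```

As the distribution sharpens across layers, the edge estimate moves steadily towards the bin the layers agree on, which is the intuition behind the localization gains.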

Benchmarks (T4, TensorRT FP16, batch=1)

| Model | Params | GFLOPs | AP (scratch) | AP (obj365→COCO) | Latency |
|---|---|---|---|---|---|
| D-FINE-N | 4M | 7G | 42.8 | n/a | 2.12ms (472 FPS) |
| D-FINE-S | 10M | 25G | 48.5 | 50.7 | 3.49ms |
| D-FINE-M | 19M | 57G | 52.3 | 55.1 | 5.62ms |
| D-FINE-L | 31M | 91G | 54.0 | 57.3 | 8.07ms |
| D-FINE-X | 62M | 202G | 55.8 | 59.3 | 12.89ms |

D-FINE-X with Objects365 pre-training hits 59.3 AP, within 1 AP of the 127M-parameter RF-DETR-2XL (60.1 AP) at roughly half the parameter count. The Nano variant runs at 2.12ms per image (about 472 FPS), the lowest T4 latency of any model benchmarked in this post.
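The FPS figure for the Nano variant is just the reciprocal of its batch-1 latency, and the same conversion applies to the rest of the table. A small sketch, using the latencies listed above (the helper name is made up for illustration):

```python
def fps_from_latency_ms(latency_ms):
    """Frames per second implied by a per-image latency at batch size 1."""
    return 1000.0 / latency_ms

# Latencies (ms) from the D-FINE benchmark table above.
dfine_latency_ms = {
    "D-FINE-N": 2.12,
    "D-FINE-S": 3.49,
    "D-FINE-M": 5.62,
    "D-FINE-L": 8.07,
    "D-FINE-X": 12.89,
}

dfine_fps = {name: round(fps_from_latency_ms(ms)) for name, ms in dfine_latency_ms.items()}
for name, fps in dfine_fps.items():
    print(f"{name}: {fps} FPS")
```

These are single-stream numbers; batching or pipelining on the same GPU can change real-world throughput.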

Deployment

The repo includes production-ready tools that many alternatives lack:

- ONNX, TensorRT, and OpenVINO export scripts

- C++ inference examples with CMakeLists.txt for ONNX Runtime, TensorRT, and OpenVINO

- HuggingFace Transformers support via ustc-community/models

- Official AWS deployment guide (Docker + Batch + SageMaker)

Training

Moderate difficulty. Requires COCO-format annotations and manual config YAML editing. The repo provides custom dataset config templates and supports Objects365 transfer learning.
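For readers who have not worked with COCO-format annotations, here is a minimal sketch of the structure plus a sanity check worth running before training. The file name, category, and validate_coco helper are hypothetical; only the top-level keys and the [x, y, width, height] box convention come from the COCO format itself. Any D-FINE-specific requirements, such as category id conventions, should be checked against the repo's dataset templates.

```python
# Smallest useful COCO-style detection dataset: one image, one box, one class.
dataset = {
    "images": [
        {"id": 1, "file_name": "frame_0001.jpg", "width": 1280, "height": 720},
    ],
    "annotations": [
        {
            "id": 1,
            "image_id": 1,
            "category_id": 1,
            "bbox": [100.0, 200.0, 50.0, 80.0],  # [x_min, y_min, width, height] in pixels
            "area": 4000.0,
            "iscrowd": 0,
        },
    ],
    "categories": [{"id": 1, "name": "forklift"}],
}

def validate_coco(coco):
    """Return a list of problems found; an empty list means the checks passed."""
    image_sizes = {img["id"]: (img["width"], img["height"]) for img in coco["images"]}
    category_ids = {cat["id"] for cat in coco["categories"]}
    problems = []
    for ann in coco["annotations"]:
        if ann["image_id"] not in image_sizes:
            problems.append(f"annotation {ann['id']}: unknown image_id")
            continue
        if ann["category_id"] not in category_ids:
            problems.append(f"annotation {ann['id']}: unknown category_id")
        x, y, w, h = ann["bbox"]
        img_w, img_h = image_sizes[ann["image_id"]]
        if w <= 0 or h <= 0 or x < 0 or y < 0 or x + w > img_w or y + h > img_h:
            problems.append(f"annotation {ann['id']}: box outside image or empty")
    return problems

print(validate_coco(dataset))
```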

5 — RF-DETR: The New Contender

Not from the original thread, but RF-DETR appeared in the subreddit’s sidebar discussions and is too important to skip. Published at ICLR 2026, it’s the first real-time detector to surpass 60 AP on COCO.

Architecture

RF-DETR (Roboflow) is built on a fundamentally different foundation than RT-DETR:

| Aspect | RF-DETR | RT-DETR |
|---|---|---|
| Developer | Roboflow | Baidu |
| Backbone | DINOv2 (self-supervised ViT) | ResNet / HGNetv2 |
| Key Innovation | NAS for accuracy-latency tuning | Efficient hybrid encoder |
| Foundation | LW-DETR + DINOv2 | DETR variants |

The NAS (Neural Architecture Search) feature is the differentiator: after fine-tuning a base network on your data, RF-DETR can evaluate thousands of accuracy-latency configurations without re-training. You pick the configuration that matches your latency budget.
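The final selection step amounts to a filter followed by an argmax. The sketch below is not the rfdetr API: Config and best_under_budget are hypothetical names, and the candidate list reuses the four Apache 2.0 RF-DETR variants from this section's benchmark table as stand-ins for the many configurations a NAS run would produce.

```python
from typing import NamedTuple, Optional, Sequence

class Config(NamedTuple):
    name: str
    ap: float          # COCO accuracy
    latency_ms: float  # T4, TensorRT FP16, batch=1

def best_under_budget(configs: Sequence[Config], budget_ms: float) -> Optional[Config]:
    """Most accurate configuration whose latency fits the budget, if any."""
    eligible = [c for c in configs if c.latency_ms <= budget_ms]
    return max(eligible, key=lambda c: c.ap) if eligible else None

# Stand-in candidates taken from the published RF-DETR variants.
candidates = [
    Config("RF-DETR-N", 48.4, 2.3),
    Config("RF-DETR-S", 53.0, 3.5),
    Config("RF-DETR-M", 54.7, 4.4),
    Config("RF-DETR-L", 56.5, 6.8),
]

choice = best_under_budget(candidates, budget_ms=5.0)
print(choice.name if choice else "nothing fits the budget")
```

With a 5ms budget this picks the M variant; tightening the budget below 2.3ms leaves no candidate, which is the signal to revisit either the hardware or the requirement.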

Benchmarks (T4, TensorRT FP16, batch=1)

| Model | AP | Latency | Params | License |
|---|---|---|---|---|
| RF-DETR-N | 48.4 | 2.3ms (434 FPS) | 30M | Apache 2.0 |
| RF-DETR-S | 53.0 | 3.5ms | 32M | Apache 2.0 |
| RF-DETR-M | 54.7 | 4.4ms | 34M | Apache 2.0 |
| RF-DETR-L | 56.5 | 6.8ms | 34M | Apache 2.0 |
| RF-DETR-XL | 58.6 | 11.5ms | 126M | PML 1.0 |
| RF-DETR-2XL | 60.1 | 17.2ms | 127M | PML 1.0 |

Also includes instance segmentation variants (all Apache 2.0), from 40.3 AP (Seg-N) to 49.9 AP (Seg-2XL).

Training

The easiest in this list. pip install rfdetr, then train via Roboflow platform or Python API. Google Colab notebook provided. The NAS feature means you can explore accuracy-speed tradeoffs after a single training run.

6 — YOLO-NAS: The License Trap

u/Responsible-Ear7011 suggested YOLO-NAS:

“Pre-trained models cannot be used for commercial purposes but if you train yourself the model you can use it for commercial use.”

This needs clarification because the license is more restrictive than most people realize.

The Reality

- SuperGradients library code: Apache 2.0 — fully open

- Pre-trained YOLO-NAS weights: Custom license that explicitly prohibits commercial use, including production environments

- Self-trained models: Gray area. The code is Apache 2.0, but the architecture was discovered by Deci’s proprietary AutoNAC engine. Using it commercially could be risky

Benchmarks (T4, TensorRT FP16)

| Model | AP | FP16 Latency | INT8 Latency |
|---|---|---|---|
| YOLO-NAS S | 47.5 | 3.21ms | 2.36ms |
| YOLO-NAS M | 51.55 | 5.85ms | 3.78ms |
| YOLO-NAS L | 52.22 | 7.87ms | 4.78ms |

The INT8 quantization story is genuinely impressive — only ~0.5 mAP drop from FP16 to INT8, thanks to quantization-aware RepVGG blocks in the backbone.
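To put the two latency columns in perspective, the FP16-to-INT8 speedup can be computed directly from the table. This is plain arithmetic on the figures above; the variable names are illustrative.

```python
# (FP16 latency ms, INT8 latency ms) from the YOLO-NAS benchmark table.
yolo_nas_latency_ms = {
    "YOLO-NAS S": (3.21, 2.36),
    "YOLO-NAS M": (5.85, 3.78),
    "YOLO-NAS L": (7.87, 4.78),
}

# Speedup factor: how many times faster INT8 is than FP16.
speedups = {
    name: round(fp16 / int8, 2)
    for name, (fp16, int8) in yolo_nas_latency_ms.items()
}
for name, factor in speedups.items():
    print(f"{name}: {factor}x faster with INT8")
```

The gain grows with model size, from roughly 1.4x on S to roughly 1.6x on L.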

Verdict

Good numbers, but the licensing situation makes it unsuitable for commercial products unless you’re willing to train from scratch and accept the legal ambiguity. Deci was acquired by NVIDIA in 2024, and the last release was v3.7.1 (April 2024). The project appears to be in maintenance mode.

7 — Darknet/YOLO: The Original, Rewritten

The highest-upvoted single comment (25 points) came from u/StephaneCharette, who maintains the Darknet fork:

“Darknet/YOLO, the original YOLO framework. Has been greatly updated in the last 2 years, lots of it re-written from the original C code. Still faster and more precise than what you’d get from Ultralytics, and completely open-source.”

What It Is

The original YOLO implementation by Joseph Redmon and Alexey Bochkovskiy, now maintained by Stephane Charette (sponsored by Hank.ai). Rewritten in C++ (C++17, CMake build), with versions up to v5.1 “Moonlit” (December 2025).

- License: Apache 2.0

- Models supported: YOLOv2, YOLOv3, YOLOv4, YOLOv7 (with tiny variants)

- Training: CLI-based, no Python dependency, supports CUDA and ROCm (AMD)

- Companion tools: DarkHelp (C++ API), DarkMark (annotation tool)

Why Consider It

If you need a standalone C++ executable with zero Python dependency — embedded systems, in-vehicle computers, real-time video processing — Darknet is the most battle-tested option. It supports ONNX export and claims up to 1000 FPS on RTX 3090 for small models.

The Catch

No YOLOv8/v9/v10/v11 support. You’re limited to YOLOv7 as the newest architecture. For modern accuracy benchmarks, the DETR-based alternatives above outperform it. But for pure C++ deployment speed, it’s hard to beat.

8 — The Honorable Mentions

YOLOX (Apache 2.0)

u/JustALvlOneGoblin asked: “What about YOLOX? Not an alternative, but I barely see it mentioned anymore.”

There’s a reason. YOLOX (Megvii, 2021) introduced anchor-free design and decoupled heads, innovations that many later YOLO variants adopted. YOLOX’s ideas became the baseline. But the model itself was surpassed by YOLOv7/v8/v9 and Megvii stopped active development in 2022.

| Model | Params | mAP | Status |
|---|---|---|---|
| YOLOX-S | 9.0M | 40.5 | Unmaintained since 2022 |
| YOLOX-M | 25.3M | 46.9 | Unmaintained since 2022 |
| YOLOX-L | 54.2M | 49.7 | Unmaintained since 2022 |

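Apache 2.0 license is its remaining strength. If you need an Apache-licensed YOLO and can accept 2021-era accuracy, it works. But RT-DETR and D-FINE offer higher AP under the same license: D-FINE-S, for example, reaches 48.5 AP with 10M parameters versus 40.5 for the 9.0M-parameter YOLOX-S.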

LibreYOLO

A community project aiming to be the MIT-licensed Ultralytics replacement. Supports YOLOv9, YOLOX, RF-DETR, and RT-DETR with a unified API: pip install libreyolo.

At 152 stars and very early development, it’s promising but not production-ready. The MIT wrapper may not override the upstream GPL licensing of YOLOv9 weights.

Moondream (Apache 2.0)

u/ParsaKhaz offered a different approach entirely:

“Moondream is a open source VLM with object detection capabilities that generalize out of the box to any object that you can describe. Moondream takes far less examples than a Darknet/Yolo/rt-detr type model.”

Moondream is a 2B parameter VLM with a dedicated detect() API for zero-shot, open-vocabulary bounding box detection. No training data needed — just describe what you want to find.

| Feature | Moondream 2 | YOLO11 |
|---|---|---|
| Params | 2B | 2.6–56M |
| VRAM | ~4GB | ~1–6GB |
| Zero-shot | Yes | No |
| Training data needed | None | Yes (labeled bboxes) |
| Speed | ~1-2s per image | ~8ms per image |
| License | Apache 2.0 | AGPL-3.0 |

COCO mAP improved from 30.5 to 51.2 through rolling updates (as of March 2025). Not a YOLO replacement for production pipelines, but excellent for prototyping, edge deployment, and when you have no training data.

9 — Head-to-Head Comparison

All benchmarks on T4 GPU, TensorRT FP16 where available:

| Model | AP | Params | Latency | License | Training Ease |
|---|---|---|---|---|---|
| D-FINE-X (obj365) | 59.3 | 62M | 12.9ms | Apache 2.0 | Moderate |
| RF-DETR-2XL | 60.1 | 127M | 17.2ms | PML 1.0 | Easy |
| D-FINE-L (obj365) | 57.3 | 31M | 8.1ms | Apache 2.0 | Moderate |
| RF-DETR-L | 56.5 | 34M | 6.8ms | Apache 2.0 | Easy |
| RT-DETRv2-X | 54.3 | 76M | 13.5ms | Apache 2.0 | Moderate |
| D-FINE-M (obj365) | 55.1 | 19M | 5.6ms | Apache 2.0 | Moderate |
| RT-DETRv2-L | 53.4 | 42M | 9.3ms | Apache 2.0 | Moderate |
| YOLO-NAS L | 52.2 | ~14M | 7.9ms | Custom | Moderate |
| RT-DETRv2-M | 49.9 | 31M | 6.2ms | Apache 2.0 | Easy |
| D-FINE-N | 42.8 | 4M | 2.1ms | Apache 2.0 | Moderate |
| RF-DETR-N | 48.4 | 30M | 2.3ms | Apache 2.0 | Easy |
| Moondream 2 | ~51.2 | 2B | ~1-2s | Apache 2.0 | None needed |
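One way to read this table is to ask which Apache 2.0 models are not beaten on both accuracy and latency by some other Apache 2.0 model. The sketch below computes that Pareto front from the rows above. It is an illustration under a few assumptions: Moondream 2 is left out because its figures are approximate, the D-FINE rows use Objects365 pre-training while the RT-DETRv2 rows do not, and pareto_front is a made-up helper name.

```python
# (name, AP, latency_ms, license) copied from the head-to-head table.
MODELS = [
    ("D-FINE-X", 59.3, 12.9, "Apache 2.0"),
    ("RF-DETR-2XL", 60.1, 17.2, "PML 1.0"),
    ("D-FINE-L", 57.3, 8.1, "Apache 2.0"),
    ("RF-DETR-L", 56.5, 6.8, "Apache 2.0"),
    ("RT-DETRv2-X", 54.3, 13.5, "Apache 2.0"),
    ("D-FINE-M", 55.1, 5.6, "Apache 2.0"),
    ("RT-DETRv2-L", 53.4, 9.3, "Apache 2.0"),
    ("YOLO-NAS L", 52.2, 7.9, "Custom"),
    ("RT-DETRv2-M", 49.9, 6.2, "Apache 2.0"),
    ("D-FINE-N", 42.8, 2.1, "Apache 2.0"),
    ("RF-DETR-N", 48.4, 2.3, "Apache 2.0"),
]

def pareto_front(models):
    """Names of models that no other model matches or beats on both AP and latency."""
    front, best_ap = [], float("-inf")
    # Walk from fastest to slowest; on latency ties, look at higher AP first.
    for name, ap, _latency_ms, _license in sorted(models, key=lambda m: (m[2], -m[1])):
        if ap > best_ap:  # more accurate than every faster model seen so far
            front.append(name)
            best_ap = ap
    return front

apache_only = [m for m in MODELS if m[3] == "Apache 2.0"]
front = pareto_front(apache_only)
print(front)
```

On these numbers every RT-DETRv2 row is dominated by a D-FINE or RF-DETR row, so its case rests on ecosystem and training ergonomics rather than the accuracy-latency tradeoff.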

10 — What Should You Actually Use?

For Maximum Accuracy (and you have the budget)

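D-FINE-X with Objects365 pre-training: 59.3 AP at 62M params, Apache 2.0, backed by an ICLR 2025 Spotlight paper. It is the most accurate Apache 2.0 model in the comparison table; RF-DETR-2XL scores higher (60.1 AP) but ships under PML 1.0.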

For Best Accuracy per Millisecond

D-FINE-L (57.3 AP, 8.1ms) or RF-DETR-L (56.5 AP, 6.8ms). D-FINE wins on raw accuracy; RF-DETR wins on latency. Both Apache 2.0 for the open variants.

For Easiest Training

RF-DETR. pip install rfdetr, upload your data to Roboflow, click train. The NAS feature lets you explore accuracy-speed tradeoffs without re-training. Downsides: larger models need the paid tier, and you’re buying into the Roboflow ecosystem.

For HuggingFace-native Workflows

RT-DETRv2. First-class transformers support, pre-trained weights on the Hub, standard Trainer API. Also available in Ultralytics for YOLO-style syntax, though that package is itself AGPL-3.0.

For Zero-Shot / No Training Data

Moondream 2. Describe what you want to detect in natural language, get bounding boxes back. 2B params, runs on a laptop. Not fast enough for real-time, but perfect for prototyping and exploration.

For Pure C++ Embedded Deployment

Darknet/YOLO. Standalone executable, no Python, supports CUDA and ROCm. Limited to YOLOv7 era architectures, but unmatched for raw C++ deployment simplicity.

What About YOLO11?

Keep it when: you need >30 FPS with known classes, you already have an Enterprise License, or your project is open-source (AGPL compliance). It’s still the fastest at the smallest sizes (2.6M params). Just know what the license means.

11 — The Bigger Picture

The Reddit thread reveals a shift in the object detection landscape that mirrors what happened with LLMs. The community is moving from monolithic, single-framework solutions (Ultralytics YOLO) to a diverse ecosystem where the best tool depends on your specific constraints:

- License determines half the decision for commercial products

- Training ease matters more than people admit (RF-DETR’s one-click training is a real selling point)

- DETR-based architectures have caught up to CNN-based YOLO and, in the benchmarks above, beat the YOLO-family models listed (YOLO-NAS, YOLOX) on accuracy at comparable latency; RT-DETR, D-FINE, and RF-DETR all use transformer decoders

- Zero-shot VLMs like Moondream are becoming a legitimate prototyping step before fine-tuning traditional detectors

The era of “just use YOLO” is over. The alternatives aren’t just viable — in many cases, they’re better.

References

- Reddit Thread — Original discussion

- RT-DETR — Apache 2.0, CVPR 2024

- RT-DETRv2 Paper

- D-FINE — Apache 2.0, ICLR 2025 Spotlight

- D-FINE Deployment Guide

- RF-DETR — Apache 2.0 (open models), ICLR 2026

- YOLO-NAS / SuperGradients

- Darknet/YOLO — Apache 2.0

- YOLOv9 — GPL-3.0

- YOLOX — Apache 2.0

- LibreYOLO — MIT

- Moondream — Apache 2.0