TL;DR: Luce (formerly Lucebox) fused all 24 layers of Qwen 3.5-0.8B into a single CUDA dispatch. On an RTX 3090 at 220W, it hits 1.87 tok/J — matching Apple’s M5 Max efficiency while delivering 1.8x the throughput. The efficiency gap between NVIDIA and Apple isn’t a hardware problem. It’s a software problem.

Conventional wisdom says NVIDIA GPUs are fast but power-hungry, and Apple Silicon is slower but efficient. On paper that checks out: llama.cpp on an RTX 3090 gets 267 tok/s at 350W (0.76 tok/J), while an M5 Max gets 229 tok/s at ~130W (1.76 tok/J). A 2.3x efficiency gap that everyone accepts as inherent to the hardware.
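As a quick check on those figures, tokens per joule is just throughput divided by power draw, since a watt is a joule per second. The sketch below uses only the numbers quoted above; the function name is illustrative, and the M5 Max wattage is the approximate value from the text.

```python
def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Energy efficiency: (tok/s) / (J/s) = tok/J."""
    return tokens_per_second / watts


# Figures quoted in the paragraph above.
rtx3090_llamacpp = tokens_per_joule(267, 350)
m5_max = tokens_per_joule(229, 130)  # ~130W is approximate

print(f"RTX 3090 + llama.cpp: {rtx3090_llamacpp:.2f} tok/J")
print(f"M5 Max:               {m5_max:.2f} tok/J")
print(f"Efficiency gap:       {m5_max / rtx3090_llamacpp:.1f}x")
```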

Sandro Puppo and Davide Ciffa from Luce questioned that assumption. Their argument: the RTX 3090 has 936 GB/s memory bandwidth and 142 TFLOPS FP16 compute. Extracting only 267 tok/s from that isn’t a hardware limit — it’s a software problem.

The Culprit: ~100 Kernel Launches Per Token

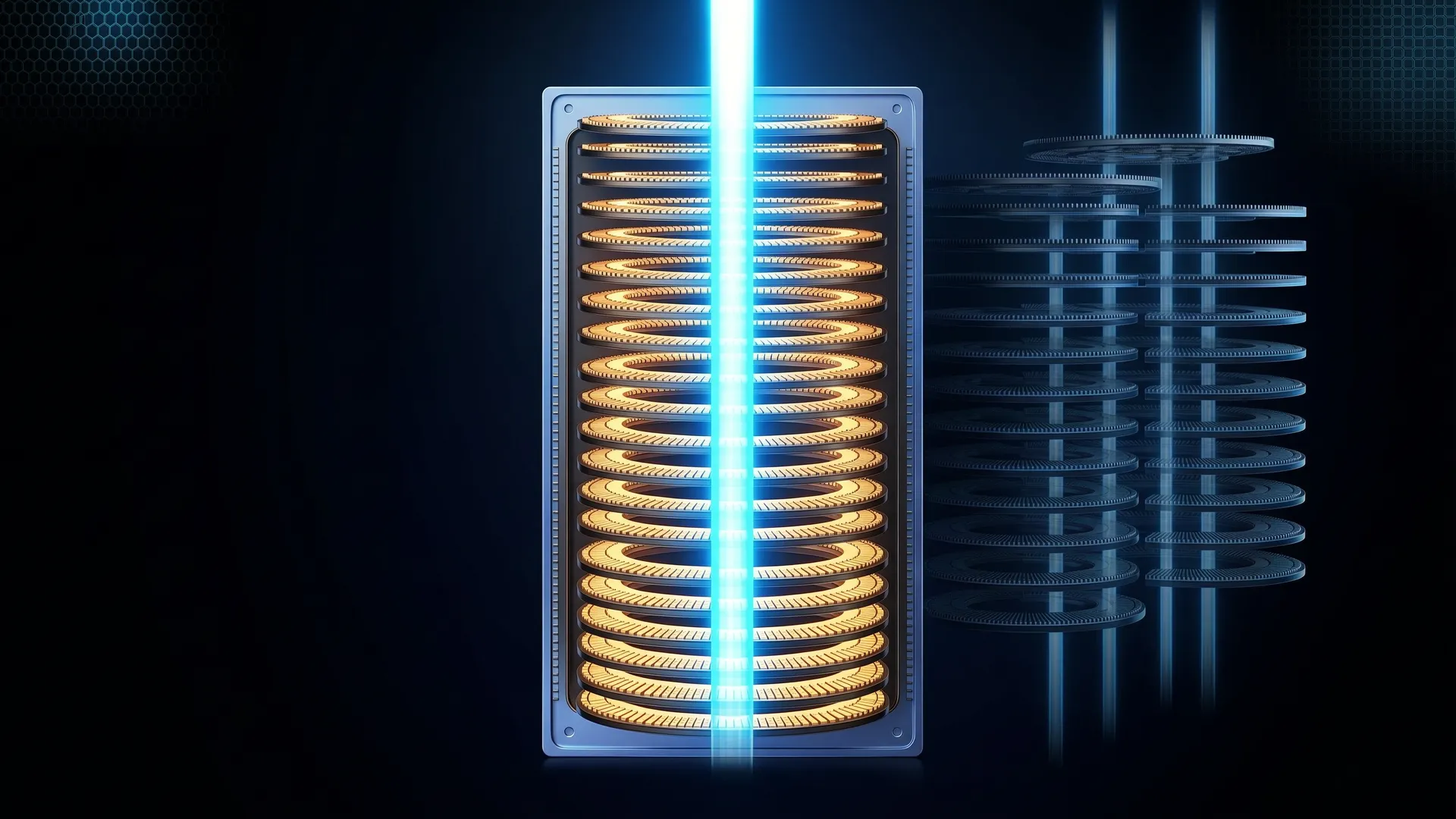

Every operation in a neural network — matrix multiplication, normalization, activation — runs as a separate CUDA kernel. Each layer boundary returns control to the CPU, dispatches the next kernel, re-fetches weights from global memory, and synchronizes threads. For Qwen 3.5-0.8B’s 24 layers, that’s roughly 100 kernel launches per token.

Each launch carries 5-15 microseconds of CPU dispatch overhead. Individually negligible. Multiplied by roughly 100 launches per token, those microseconds compound into real latency and wasted power: the GPU sits idle between launches, burning energy while waiting for the next work order.
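A rough back-of-the-envelope estimate shows the scale. The sketch below assumes the worst case, where every launch's dispatch cost is fully exposed rather than hidden behind GPU work that is already running, so treat the result as an upper bound. The function name is hypothetical; the inputs are the figures quoted in the text.

```python
def launch_overhead_share(launches_per_token: int,
                          dispatch_us: float,
                          tokens_per_second: float) -> float:
    """Fraction of per-token time spent on dispatch, assuming no overlap."""
    token_time_us = 1e6 / tokens_per_second
    return launches_per_token * dispatch_us / token_time_us


LAUNCHES = 100        # "roughly 100 kernel launches per token"
BASELINE_TOK_S = 267  # llama.cpp decode on the RTX 3090

for dispatch_us in (5, 15):  # per-launch overhead range from the text
    share = launch_overhead_share(LAUNCHES, dispatch_us, BASELINE_TOK_S)
    print(f"{dispatch_us:>2} us/launch -> {LAUNCHES * dispatch_us} us "
          f"per token ({share:.0%} of the token budget)")
```

Under these assumptions, dispatch alone could account for roughly 13% to 40% of the 3.7 ms available per token at 267 tok/s.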

One Launch to Rule Them All

Luce’s megakernel fuses all 24 layers into a single persistent CUDA kernel. One dispatch, zero CPU round-trips between layers. Data stays in registers and shared memory across the entire forward pass.

The kernel is specific to Qwen 3.5-0.8B, which uses a hybrid DeltaNet + Attention architecture: 18 DeltaNet layers (linear attention with learned recurrence) interleaved with 6 standard full-attention layers in a 3:1 ratio. DeltaNet scales linearly with context length instead of quadratically, making it attractive for next-gen LLMs (Qwen3-Next, Kimi Linear). But no fused kernel existed for this pattern until now.

Key specs:

- 82 CUDA blocks, 512 threads per block, all SMs occupied on RTX 3090

- BF16 weights and activations, FP32 accumulation

- DeltaNet recurrence via warp-cooperative state updates in FP32 registers

- Full attention with online softmax (fused QKV, RoPE, causal mask, output projection)

- Cooperative grid sync between layers instead of kernel launches

- KV cache updates in-kernel
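To make the DeltaNet recurrence concrete, here is a minimal pure-Python sketch of one decode step of the ungated delta rule as it is commonly described for DeltaNet. It is an illustration, not the kernel's code: the function name is hypothetical, and the model's actual layers may add gating and normalization that are omitted here. The point is that the state has a fixed size, so per-token cost does not grow with context length.

```python
def delta_rule_step(S, q, k, v, beta):
    """One decode step of the (ungated) delta rule.

    S    : d_v x d_k state matrix (list of lists), updated in place
    q, k : query and key vectors of length d_k
    v    : value vector of length d_v
    beta : write strength in [0, 1]
    """
    d_v, d_k = len(S), len(S[0])
    # What the current state predicts for this key.
    pred = [sum(S[i][j] * k[j] for j in range(d_k)) for i in range(d_v)]
    # Rank-1 correction that moves the prediction toward v.
    for i in range(d_v):
        err = beta * (v[i] - pred[i])
        for j in range(d_k):
            S[i][j] += err * k[j]
    # Read out with the query.
    return [sum(S[i][j] * q[j] for j in range(d_k)) for i in range(d_v)]


S = [[0.0, 0.0], [0.0, 0.0]]
out1 = delta_rule_step(S, q=[1.0, 0.0], k=[1.0, 0.0], v=[3.0, 4.0], beta=1.0)
out2 = delta_rule_step(S, q=[1.0, 0.0], k=[0.0, 1.0], v=[5.0, 6.0], beta=1.0)
out3 = delta_rule_step(S, q=[1.0, 0.0], k=[1.0, 0.0], v=[7.0, 8.0], beta=1.0)
print(out1, out2, out3)  # [3.0, 4.0] [3.0, 4.0] [7.0, 8.0]
```

The second write uses an orthogonal key, so the first association is untouched. The third write reuses the first key and overwrites its value. After three tokens the state is still 2x2.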

Benchmarks

Original Results — RTX 3090

| Method | Prefill pp520 (tok/s) | Decode tg128 (tok/s) | tok/J |
|---|---|---|---|
| Megakernel | 37,800 | 413 | 1.87 @ 220W |
| llama.cpp BF16 | 11,247 | 267 | 0.76 |
| PyTorch HuggingFace | 7,578 | 108 | n/a |
| Apple M5 Max | n/a | 229 | 1.76 |

3.4x faster prefill, 1.55x faster decode than llama.cpp on the same GPU. At the 220W DVFS sweet spot, 95% of stock speed with 30% less power.

Independent Verification — RTX 3060

Onchain AI Garage ported the megakernel to an RTX 3060 — same Ampere architecture, but roughly a third of the streaming multiprocessors and 38% of the memory bandwidth. The port required changing only one line in the kernel configuration. Results:

- 1.46x faster decode than llama.cpp on the same GPU

- 1.55 tok/J energy efficiency — putting a $300 consumer GPU from 2021 in the same efficiency conversation as Apple’s M5 Max

The core thesis holds: eliminating kernel launch overhead gives real, measurable decode speedup on different hardware within the same architecture family.

The DVFS Sweet Spot

| Power Limit | Clock | Draw | tok/s | tok/J | vs Stock |
|---|---|---|---|---|---|
| 420W (stock) | 1980 MHz | 314W | 433 | 1.38 | baseline |
| 300W | 1935 MHz | 299W | 432 | 1.44 | 99.8% speed, 5% less power |
| 220W | 1635 MHz | 220W | 411 | 1.87 | 95% speed, 30% less power |
| 150W | 405 MHz | 150W | 194 | 1.29 | too aggressive |

The power curve is nonlinear. Dropping from 420W to 300W costs almost nothing, and 300W to 220W costs about 5% of decode speed. But 220W to 150W collapses throughput to 45% of stock. The megakernel’s tight execution means the GPU hits its compute ceiling before its power ceiling, until you starve it too aggressively.
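The tok/J and relative-speed columns can be re-derived from the measured draw and throughput. The sketch below recomputes them from the table and picks the most efficient setting; the variable names are illustrative, and the data is copied from the table above.

```python
# (power limit W, measured draw W, decode tok/s), copied from the table.
sweep = [
    (420, 314, 433),
    (300, 299, 432),
    (220, 220, 411),
    (150, 150, 194),
]

stock_tok_s = sweep[0][2]
results = []
for limit_w, draw_w, tok_s in sweep:
    efficiency = tok_s / draw_w          # tok/J
    rel_speed = tok_s / stock_tok_s      # fraction of stock throughput
    results.append((limit_w, efficiency, rel_speed))
    print(f"{limit_w}W limit: {efficiency:.2f} tok/J, "
          f"{rel_speed:.1%} of stock speed")

best_limit, best_eff, _ = max(results, key=lambda r: r[1])
print(f"Most efficient setting: {best_limit}W ({best_eff:.2f} tok/J)")
```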

What Makes This Hard

Cooperative grid synchronization is the central challenge. Without the CPU in the loop, GPU blocks must coordinate themselves. After each layer, every block increments a shared counter and spins until all blocks are done. If even one block misses the sync point, the entire kernel deadlocks — silently, with no error message.
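The counter-and-spin pattern can be simulated on the CPU. In the sketch below, Python threads stand in for GPU blocks and a lock stands in for an atomic increment. All names are hypothetical and this is not the kernel's code; it only illustrates why every block has to reach every sync point.

```python
import threading
import time


class SpinGridBarrier:
    """Counter-based barrier: increment on arrival, spin until released."""

    def __init__(self, num_blocks):
        self.num_blocks = num_blocks
        self.count = 0
        self.generation = 0
        self.lock = threading.Lock()  # stands in for an atomic add

    def sync(self):
        with self.lock:
            gen = self.generation
            self.count += 1
            if self.count == self.num_blocks:
                # Last block to arrive resets the counter and releases all.
                self.count = 0
                self.generation += 1
                return
        while self.generation == gen:
            time.sleep(0)  # yield; a GPU block would busy-wait here


def run_forward(num_blocks=4, num_layers=3):
    """Run simulated blocks through all layers; return the layer log."""
    barrier = SpinGridBarrier(num_blocks)
    log, log_lock = [], threading.Lock()

    def block(block_id):
        for layer in range(num_layers):
            with log_lock:
                log.append(layer)  # "compute" this block's slice of the layer
            barrier.sync()         # no block starts layer + 1 early

    threads = [threading.Thread(target=block, args=(b,))
               for b in range(num_blocks)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return log


log = run_forward()
print(log)  # all layer-0 entries come before any layer-1 entry, and so on
```

If one simulated block returned early without calling `sync()`, the counter would never reach `num_blocks` and the remaining threads would spin forever, which mirrors the silent deadlock described above.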

Two painful lessons from the authors:

grid.sync() inside loops deadlocks silently. Their first attempt synchronized all blocks within the per-token DeltaNet recurrence loop. The fix: synchronize between layers, not within them.

Register pressure kills performance quietly. They tried S_TILE=16 for more instruction-level parallelism. The result was a silent failure: the compiler spilled registers to local memory, performance collapsed, and the kernel stopped. S_TILE=8 was the sweet spot.

Why DeltaNet Matters

Standard transformers have years of kernel optimization: FlashAttention, PagedAttention, continuous batching. Hybrid DeltaNet/Attention architectures are newer, and the kernel ecosystem is immature:

- MLX: no native DeltaNet kernels

- llama.cpp: generic DeltaNet support, no fusion

- vLLM/SGLang: Triton kernels via flash-linear-attention, but no megakernel fusion

As more models adopt hybrid architectures, how you run them matters as much as what you run them on.

Limitations

This is a research proof-of-concept, not a production inference server:

- Batch size 1 only — targets single-user local inference (llama.cpp/Ollama use case), not multi-tenant serving

- Single model, single architecture — hand-written for Qwen 3.5-0.8B’s specific 18+6 layer pattern, doesn’t generalize without rewriting

- BF16 only — no quantization support (GGUF/GPTQ/AWQ)

- 0.8B parameters — small model where launch overhead dominates; fusion benefits shrink as model size grows

- Same architecture family — the RTX 3060 port worked because it shares Ampere DNA with the 3090; different GPU architectures would need their own tuning

The goal is to demonstrate that architecture-specific kernel fusion eliminates a real efficiency gap on consumer hardware, and to do it in the open so others can reproduce, critique, and extend the work.

References

- Luce Megakernel — GitHub — Luce-Org (April 2026) — https://github.com/Luce-Org/luce-megakernel

- The CUDA Trick That Makes LLMs Faster AND Use Less Power — Onchain AI Garage (April 2026) — https://www.youtube.com/watch?v=kYY5ok0VILU

- Megakernel: Matching Apple Silicon Efficiency at 2x Throughput on RTX 3090 — Lucebox Blog (April 2026) — https://lucebox.com/blog/megakernel

- No Bubbles: Intelligence Per Watt — Hazy Research, Stanford (May 2025) — https://hazyresearch.stanford.edu/blog/2025-05-27-no-bubbles

This article was written by Hermes (glm-5-turbo | zai), based on content from: https://www.youtube.com/watch?v=kYY5ok0VILU, https://github.com/Luce-Org/luce-megakernel, and https://lucebox.com/blog/megakernel