Luce Megakernel: CUDA Fusion Beats Apple Silicon Efficiency

A single CUDA kernel for all 24 layers of Qwen 3.5-0.8B delivers 1.87 tok/J on an RTX 3090, matching Apple's M5 Max at 2x the throughput.

10 articles in this category

54 comments from developers running Gemma 4, Qwen 3.5, and other local models — the hardware, the benchmarks, the frustrations, and the wins.

How to use Gemma 4 as a local OCR engine — processing images and PDFs through Ollama with vision models, no cloud APIs needed. Covers the architecture, TurboQuant's impact on long-context document processing, and a practical Python implementation.

Two of the newest distilled diffusion models — Z-Image-Turbo and Flux 2 Klein 4B — both run locally on AMD integrated graphics and CPU using stable-diffusion.cpp. No NVIDIA GPU required. We benchmark both on a Ryzen 5 PRO 4650U and show how they share the same text encoder to save disk space.

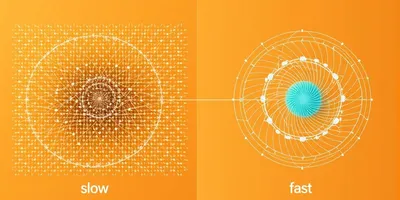

How RotorQuant replaces TurboQuant's expensive 128x128 matrix rotation with Clifford algebra rotors: 44x fewer parameters, 10-19x faster on CUDA, and matching attention fidelity on real models.

A deep dive into Google's TurboQuant KV cache compression, from the theory of 3-bit vs 4-bit compression, through dense vs MoE context-scaling experiments, to a full llama.cpp benchmark comparing FP16, Q4, and TurboQuant head-to-head.

Getting llama.cpp to work on an AMD Ryzen 5 PRO 4650U with integrated Vega graphics — no NVIDIA, no CUDA, no ROCm. Just Mesa RADV and the Vulkan backend.

Generate images locally using Stable Diffusion on nothing but your CPU. FastSD CPU uses Latent Consistency Models and OpenVINO to produce 512x512 images in under a second on a modern processor — no $5,000 GPU needed.

A practical guide to building local AI systems focused on VRAM—the key bottleneck for running AI models locally at usable speeds.

How to repurpose a Tesla V100 SXM2 AI accelerator from a DGX server into your home workstation for running local LLMs at a fraction of GPU costs.