RAG's Evolution: From Simple Retrieval to Agentic AI

Information retrieval evolved through six stages — from keyword search to agentic RAG. Each stage solved a fundamental limitation of the previous one.

Andrej Karpathy's LLM Wiki concept: an AI coding agent reads your documents once and builds a structured, interlinked markdown knowledge base in Obsidian that compounds over time, replacing the search-from-scratch approach of traditional RAG.

CLI wins when commands map directly to jobs. MCP wins when there's an abstraction gap — JS-rendered pages, OAuth auth, per-user access control. The answer is to use both.

Deep technical dive into Hermes Agent's Kanban system — the SQLite-backed task board, dispatcher internals, worker lifecycle, structured handoff via task_runs, the 9 collaboration patterns, and production best practices for building reliable multi-agent pipelines.

Youri van Hofwegen's full course on AI animation: the SCENE planning framework (Story, Character, Emotion, Narrative beats, Every-clip rules), 3D world consistency via Open Art, character creation, multi-shot prompting with Seedance 2.0, and a 720p-to-2K upscaling trick that halves credit costs.

A technical deep dive into VRSEN OpenSwarm: how its orchestrator, specialist agents, handoff graph, Composio tools, terminal launcher, and forkable repo structure turn one prompt into multi-artifact workflows.

Build RAG systems that enforce who can see what by resolving permissions once, filtering inside the database, and combining vector + BM25 search for accuracy and security.
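
The three steps named in this summary (resolve permissions once, filter before ranking, combine vector and BM25 results) can be sketched in a few lines. This is a minimal illustration, not the article's implementation: the `Doc` type, the in-memory filter standing in for a database-side filter, and the use of reciprocal rank fusion to merge the two rankings are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    """Hypothetical document record carrying its own access list."""
    doc_id: str
    allowed_groups: frozenset


def resolve_groups(user, memberships):
    """Resolve the user's groups once per request, not once per document."""
    return frozenset(memberships.get(user, ()))


def filter_allowed(docs, groups):
    """Stand-in for a database-side filter: ids of docs the groups may read."""
    return {d.doc_id for d in docs if d.allowed_groups & groups}


def rrf_fuse(rankings, allowed, k=60):
    """Merge ranked id lists with reciprocal rank fusion (an assumed choice).

    Disallowed ids are dropped before ranks are assigned, so a hidden
    document can never influence the scores of visible ones.
    """
    scores = {}
    for ranking in rankings:
        rank = 0
        for doc_id in ranking:
            if doc_id not in allowed:
                continue
            rank += 1
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


docs = [
    Doc("a", frozenset({"eng"})),
    Doc("b", frozenset({"hr"})),
    Doc("c", frozenset({"eng", "hr"})),
]
groups = resolve_groups("alice", {"alice": ["eng"]})
allowed = filter_allowed(docs, groups)
vector_ranking = ["b", "c", "a"]  # pretend output of a vector search
bm25_ranking = ["c", "b", "a"]    # pretend output of a BM25 search
results = rrf_fuse([vector_ranking, bm25_ranking], allowed)
```

In a real system the filter would run inside the database query itself, so restricted documents never leave the store.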

Step-by-step guide to building a fully local, air-gapped multimodal RAG system using IBM Docling for document extraction, n8n for orchestration, Ollama for LLM inference, and Qdrant as a vector store — all running in Docker with zero external API calls.

Replace RAG vector databases with a live-reading AI agent that crawls your website in real time using PocketFlow's 100-line Python framework, FastAPI WebSockets, and agentic coding.

Run the 80B MoE Qwen3-Next locally using llama.cpp with selective FFN layer offloading to CPU. Unsloth UD-Q4_K_XL quantization + regex-based -ot flag lets you maximize GPU usage while keeping MoE expert layers in system RAM.

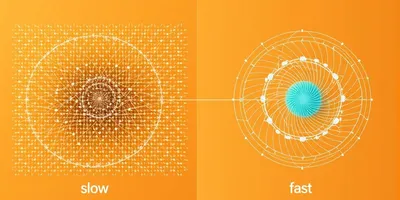

Run Qwen3.5-27B at 3.43x autoregressive speed on a single RTX 3090. Lucebox's DFlash port brings block-diffusion speculative decoding to GGUF — build, download weights, and start generating in under 20 minutes.

Google released official MTP drafter models for Gemma 4. A small companion model guesses tokens ahead, the big model verifies — same quality, nearly 3x speed on the same hardware.

Five working tricks + one failed trick + one upcoming trick for running Qwen 3.6 35B on an 8-year-old GTX 1060.

Step-by-step guide to building and training a 1.8M parameter GPT-2-style transformer from scratch on your laptop using PyTorch. Covers tokenization, model architecture, the training loop, and inference with temperature sampling.
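
Temperature sampling, the inference step mentioned in this summary, is easy to show in isolation. The sketch below uses only the standard library rather than PyTorch, and the function names are hypothetical; it illustrates the general technique, not the guide's own code.

```python
import math
import random


def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw logits into probabilities after dividing by the temperature.

    Temperatures below 1 sharpen the distribution; above 1 flatten it.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits, temperature=1.0, rng=random):
    """Draw one token index from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.0]
sharp = softmax_with_temperature(logits, temperature=0.5)
flat = softmax_with_temperature(logits, temperature=2.0)
token = sample_token(logits, temperature=0.8, rng=random.Random(0))
```

Passing a seeded `random.Random` instance keeps a run reproducible, which helps when comparing temperature settings.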

Install and configure Poolside's pool CLI coding agent on Linux/macOS. Covers auth, ACP server/client modes, MCP integration, permissions, and what the EULA actually means for your code.

Indonesia imports 7.8 million tons of LPG yearly, mostly from the US, costing IDR 87 trillion in subsidies. Two very different strategies aim to fix this: CNG from domestic gas (deploying now) and DME from coal gasification (delayed to 2028, dubious economics). Here's how they differ, who the players are, and why CNG is winning.

Step-by-step guide to setting up Hermes Agent's Kanban task board — creating specialist profiles, configuring API keys, wiring task dependency graphs, and avoiding common pitfalls that cause silent failures and lost output.

A comprehensive survey of non-NVIDIA AI chips available today — TPUs, NPUs, custom ASICs, and wafer-scale engines — from AWS Trainium3 to Cerebras WSE-3, Google TPU v6 Trillium, Korea's NPU startups, and optical interconnect upstarts.

Microsoft's exclusive grip on OpenAI is over. How the $650M Suleyman hire, a $5B annual loss, an undefined AGI clause, and Anthropic's Bedrock advantage led to the biggest AI partnership rewrite in years.

How ABC's 7:30 segment on EV charging used manufactured negativity — charging to 100%, ignoring home charging, and disabling comments — to frame electric vehicles as impractical.

A hands-on technical tutorial on Pi, the minimal open-source coding agent. Based on the free course by Owain Lewis.

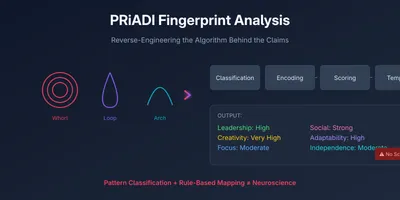

A technical breakdown of how fingerprint-based personality systems likely work under the hood, and how they differ from real neuroscience.

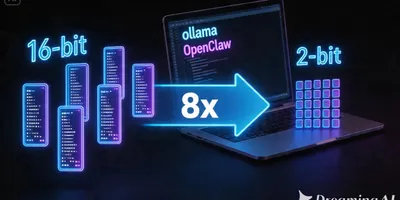

Turbovec achieves 8x memory compression for RAG embeddings via TurboQuant quantization, enabling fully local agentic workflows with OpenClaw and Ollama on consumer hardware.

Learn how to combine Pi's minimal coding agent with Archon's harness builder and Plannotator's plan-gating system to create reproducible AI development workflows.

A precise V60 Japanese iced recipe for Flores natural beans, tuned for nutty, chocolate-forward profiles using Timemore C3.

A practical walkthrough of using structured synthetic data, Unsloth fine-tuning, and a simple harness to turn a tiny base model into a fast local specialist.

How cocoindex-code uses Tree-sitter chunking and incremental re-indexing to give AI coding agents whole-repo context with 70% fewer tokens.

How the 2016 World Brewers Cup champion turned a simple ratio into a repeatable, tunable V60 pour-over method — and why it still matters a decade later.

How Hermes Agent routes eight background tasks through auxiliary models, why compression dominates spend, and how per-task model selection can cut token costs sharply.

The real reasons classic-era recordings sound better than modern music — industrial-grade gear, live performance, imperfect tuning, and high-stakes motivation.

How Indonesia's first-to-file trademark system enables brand hijacking, the legal mechanisms that should stop it but often don't, and why the BYD Denza case is just the latest symptom of a systemic problem.

The full timeline of how PT Worcas Nusantara Abadi registered the Denza trademark 13 months before BYD, transferred it during litigation, and forced BYD to rebrand as Danza in Indonesia.

How I evaluated 8 memory providers for the Hermes coding agent across two elimination rounds, discovered a broken config, and chose Lucid for persistent project-aware memory.

When a developer asked r/computervision for YOLO alternatives with permissive licenses, the community delivered. Here's what actually works: RT-DETR, D-FINE, RF-DETR, YOLO-NAS, and a few surprises.

A practical comparison of YOLO11 against SAM 3, Florence-2, GroundingDINO, and YOLO-World — covering architecture, performance, and the licensing trap that caught many off guard.

A deep dive into the 4-tier memory consolidation model and triple-stream retrieval system that makes agentmemory the most sophisticated memory system for AI agents.

How to leverage pre-trained knowledge, directory structures, and programmatic systems to influence AI agent behavior without adding token overhead.

How Iran, the US, and Israel are competing in the Great Meme War of 2026 — and why the underdog with AI Lego videos is winning the information battle.

A single CUDA kernel for all 24 layers of Qwen 3.5-0.8B delivers 1.87 tok/J on an RTX 3090, matching Apple's M5 Max at 2x the throughput.

Alibaba's Qwen Code terminated its free OAuth tier on April 15, 2026. Here's the full timeline, what changed, why it matters, and your alternatives.

A deep dive into LiveKit — what it is, how to self-host it, what it costs, and how it compares to Mediasoup, Jitsi, and commercial alternatives.

54 comments from developers running Gemma 4, Qwen 3.5, and other local models — the hardware, the benchmarks, the frustrations, and the wins.

How the AI race plays out across hardware, models, and data — and why China's structural advantages in multimodal data could reshape the industry.

How a 73% drop in extended thinking tokens turned a 191K-line/weekend multi-agent fleet into a supervised single-session workflow — and what the data tells us about why thinking budgets matter.

Brad Bonanno reveals that hitting Claude Code usage limits isn't a quota problem — it's a context hygiene problem. By auditing MCP servers, trimming CLAUDE.md bloat, replacing MCPs with CLIs, and using plan mode strategically, you can cut invisible context waste and use Claude Code all day without burning tokens.

Context7 started as an MCP server for fetching up-to-date library docs into AI coding agents. Now at v0.3.12, it bundles a CLI, a skills marketplace, a REST API, and a setup wizard — making it a one-stop solution for keeping AI assistants informed about the libraries they write code against.

Andrej Karpathy's LLM Wiki pattern — a persistent, compounding knowledge base maintained by AI — hit 5,000+ stars in days. Here's the full architecture, what the community discovered, and the structural gaps that could make it collapse.

Corridor Crew's Niko Pueringer released CorridorKey, an open-source neural network that automates green screen keying for semi-transparent elements like hair, smoke, and motion blur.

A complete inventory of all 118 Hermes Agent skills installed on my machine — 77 bundled from the Skills Hub and 41 locally created — with descriptions of what each one does, organized by category.

Auto-CoT (Zhang et al., 2022) automatically constructs chain-of-thought demonstrations by clustering questions for diversity and generating reasoning chains with zero-shot CoT. It matches or exceeds hand-crafted Manual-CoT across ten benchmark reasoning tasks with GPT-3.

Mixture of Experts models like Qwen3 Coder, Kimi K2.5, and Gemma 4 are blazing fast locally, but one-shot prompts make them fall apart. Here's why the MoE router is the culprit and how incremental construction turns them into reliable tools.

Ed Zinda breaks down what agent loops actually are, how harnesses wrap around them, when to build your own, and introduces Kit — a Go-based coding agent harness inspired by Pi's minimal design philosophy.

How to connect Hermes Agent to Zed editor via ACP (Agent Communication Protocol) — giving you full terminal agent capabilities (tools, skills, memory, web) directly in Zed's assistant panel alongside Claude Code, OpenCode, and Qwen Code.

An introduction to harness engineering and Archon, the open-source harness builder for building reliable AI coding agents.

Anthropic announced Claude Mythos Preview, a frontier model that can autonomously find and exploit zero-day vulnerabilities in every major OS and browser. Thousands of critical bugs found, some decades old. Here's what it means for cybersecurity.

Three dialed V60 recipes for Mandheling full wash: classic Japanese iced, sweetness-focused variation, and iced milk using concentrate + bypass. Optimized for Timemore C3.

Systematic investigation of a 13-second delay on every bun run dev restart, tracing through Vite middleware, Astro SSR, and CSS compilation to find the root cause.

How to use Gemma 4 as a local OCR engine — processing images and PDFs through Ollama with vision models, no cloud APIs needed. Covers the architecture, TurboQuant's impact on long-context document processing, and a practical Python implementation.

How I installed and configured Pi Agent — a terminal-based AI coding agent that supports dozens of LLM providers, custom extensions, and shared skills across agents like Claude Code and Codex.

Deep dive into Swival — a pure Python CLI coding agent by jedisct1 that handles tight context windows, graduated compaction, and multi-provider support. Includes practical setup guide and comparison with Hermes Agent.

Two of the newest distilled diffusion models — Z-Image-Turbo and Flux 2 Klein 4B — both run locally on AMD integrated graphics and CPU using stable-diffusion.cpp. No NVIDIA GPU required. We benchmark both on a Ryzen 5 PRO 4650U and show how they share the same text encoder to save disk space.

How RotorQuant replaces TurboQuant's expensive 128x128 matrix rotation with Clifford algebra rotors: 44x fewer parameters, 10-19x faster on CUDA, matching attention fidelity on real models.

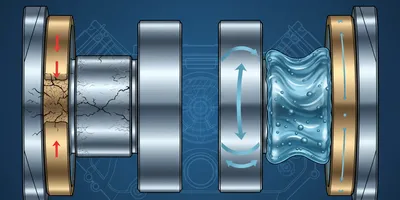

A deep dive into Google's TurboQuant KV cache compression, from the theory of 3-bit vs 4-bit compression, through dense vs MoE context scaling experiments, to a full llama.cpp benchmark with FP16, Q4, and TurboQuant head-to-head.

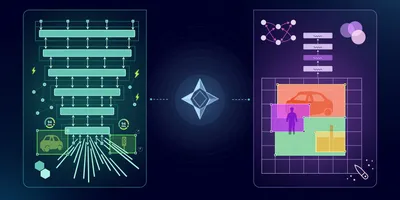

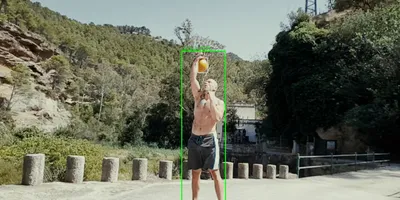

How I built a multi-stage video analysis pipeline that detects motion, classifies objects with YOLO, reads text with OCR, and describes scenes with a tiny VLM — all running on CPU with a web dashboard.

Getting llama.cpp to work on an AMD Ryzen 5 PRO 4650U with integrated Vega graphics — no NVIDIA, no CUDA, no ROCm. Just Mesa RADV and the Vulkan backend.

Generate images locally using Stable Diffusion on nothing but your CPU. FastSD CPU uses Latent Consistency Models and OpenVINO to produce 512x512 images in under a second on a modern processor — no $5,000 GPU needed.

You bought specialty Gayo wine beans, dialed in your grinder, followed a recipe — and got rubber. Here's a troubleshooting walkthrough for one of the most common problems with Japanese iced coffee, from grind size to pour structure to the beans themselves.

Climate change is quietly rewriting the flavour profile of Indonesia's most iconic crops. From the highlands of Java to the rice paddies of West Java, erratic weather is making coffee more bitter, chocolate less chocolatey, tea more astringent, and rice bland — and pushing prices higher.

A practical walkthrough of installing Hermes Agent by Nous Research — covering the installer script internals, PyTorch CPU optimization, Bun runtime compatibility, RL training vs. built-in learning, and setting up CLI skills for Tavily, Context7, and Beads.

How WorldView's open-source intelligence platform tracks the Iran-US-Israel conflict in real time — 92% Strait of Hormuz traffic drop, Iran's toll booth scheme, dark vessel patterns, and the escalating military strikes.

How OpenCode plugins work under the hood — hooking into tool execution, registering custom AI-callable tools, and controlling session compaction behavior.

A practical walkthrough of a Qwen Code configuration — with bkit's PDCA workflow engine, Context7 for live docs, context-mode protection, and 26 shared skills spanning Vue, Go, Tailwind, Tavily, and more.

A deep dive into the current state of GitHub Spec Kit — the primary maintainer left for Anthropic, PRs are piling up, the community is frustrated, and alternatives are emerging. Here's what's really going on.

How Beads replaces flat issue lists with a dependency-aware graph database, giving AI coding agents persistent memory, multi-agent coordination, and zero-conflict task tracking.

How the Strait of Hormuz — a narrow channel just 30 miles wide — controls roughly 15% of the world's energy supply and why its closure could crash the global economy.

A comprehensive analysis of the February-March 2026 war between the US-Israel coalition and Iran, covering military operations, the Strait of Hormuz blockade, escalation dynamics, and the uncertain path ahead.

A deep dive into bkit-gemini v2.0.0 — a Gemini CLI extension that adds 21 AI agents, 35 domain skills, and a 10-event hook system to enforce PDCA methodology and Context Engineering for AI-native software development.

Transform any user input into precision-crafted prompts using the 4-D Methodology for optimal AI performance across all platforms.

A videographer in Karo was prosecuted for corruption because auditors valued his creative work at Rp 0. The case exposes a systemic failure in how Indonesia's government procurement treats intellectual labor, and points to the legal instruments that already exist to fix it.

A deep dive into GitNexus v1.4.10 — the graph-powered code intelligence tool for AI agents. Covers architecture, dependency analysis, Node.js version requirements, and a clean isolation setup using nvm.

A philosophy-first walkthrough of a power-user OpenCode configuration, with Superpowers skill workflows at the core, supported by context protection, code intelligence, live docs, and vision.

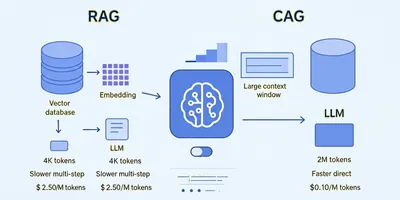

Understand RAG vs Long Context, decode the acronyms (CAG, KV Cache, RLMs), and learn how to build a local RAG agent with zero ongoing costs.

A practical guide to building local AI systems focused on VRAM—the key bottleneck for running AI models locally at usable speeds.

An analysis of Indonesia's fuel import policy shift, the government's energy sovereignty strategy, and the macroeconomic logic behind requiring private retailers to source domestically.

Learn how to use Astro 6's new Font API to load and optimize web fonts. Step-by-step tutorial covering configuration, providers, and CSS integration.

A developer's guide to building a Palantir-like system using open-source tools: Kafka for data ingestion, Spark for stream processing, Neo4j for knowledge graphs, and LLMs for autonomous agents.

An open-source autonomous agent with a built-in learning loop that creates skills from experience, improves them during use, and remembers across sessions. Unlike typical chatbots or coding copilots, Hermes runs on your server, integrates with messaging platforms, and gets smarter the longer you use it.

How to repurpose a Tesla V100 SXM2 AI accelerator from a DGX server into your home workstation for running local LLMs at a fraction of GPU costs.

How Context Mode virtualizes MCP tool outputs to reduce context consumption by 99%, extending your Claude Code sessions from 30 minutes to 3 hours.

Step-by-step guide to building a modern sticky navbar with glassmorphism effects using Tailwind CSS v4 and DaisyUI's navbar component.

Astro 6 replaces the dev server simulation layer with real runtime execution via Vite's Environment API. Technical breakdown of changes, breaking changes, and upgrade path.

Vite+ introduces a comprehensive toolchain solution combining runtime management, package handling, and frontend tooling into a single CLI. The alpha release brings monorepo support, intelligent caching, integrated linting, and seamless migration capabilities.

Despite having no features graduating to final status, JDK 26 delivers meaningful performance improvements for containerized workloads, including G1 garbage collector enhancements, HTTP/3 API, and continued progress on Project Loom and Valhalla.

Learn a practical framework for writing better prompts when building apps with AI tools like Lovable, Cursor, and Bolt. Improve code quality and avoid bug loops.

A comprehensive analysis of self-hosted Discord alternatives including Matrix, TeamSpeak, Rocket.Chat, Zulip, Sto, Fluxer, and Mattermost amid Discord's privacy controversies.

A detailed overview of the 2020 disbandment of Hololive CN, analyzing internal rumors, protest allegations, and the current status of all six members.

The inside story of how Alibaba's most important AI team walked out in a single day, and what it means for the open-source community.

A practical guide to Garage, an open-source S3-compatible object storage solution that runs on modest hardware.

Learn how to use grep with the -A, -B, and -C options to capture lines before and after matching patterns.

A deep comparison of Quarkus and Helidon as GraalVM-native Java stacks, covering licensing, drivers, OpenTelemetry, IDE and AI coding assistant support (MCP, LLM context), native-image build times, memory footprint, startup time, and a practical decision checklist for architects.

Comprehensive examination of private equity returns, healthcare industry effects, and the gap between marketing claims and actual performance.

Examination of private equity leveraged buyouts, their effects on companies, workers, and the broader economy.

Examination of private equity asset stripping through Red Lobster, Burger King, and Toys R Us case studies.

Analysis of Redis's controversial license change and the emergence of major alternatives including Valkey, Garnet, and DragonflyDB as the open-source community searches for new homes.

An analysis of GM's L87 engine recall, the shift to 0W40 oil, and the tribology behind thin oils in modern engines.

Discover how Bank Indonesia developed QRIS during the pandemic, creating an efficient payment system that bypasses expensive infrastructure and enables direct local currency transactions.

Analysis of the Discord hack exposing government IDs through age verification systems and the implications for digital identity and online safety.

Explore how batch invariance issues cause non-determinism in large language models and the solutions to achieve reproducible results in AI systems.

Explore 11 key RAG strategies including re-ranking, agentic RAG, knowledge graphs, and contextual retrieval to enhance your AI agents' performance and accuracy.

An analysis of PewDiePie's controversial AI video, breaking down his takes on AI hardware, media generation, influencer culture, and the future of AGI from a developer's perspective.

Exploring Anthropic's analysis of MCP's token consumption issues and their proposed solution using agent skills for more efficient AI agents.

Explore TOON format's 30-60% token savings for LLM interactions and learn how to implement it with agentic coding workflows for optimized AI data processing.

A technical breakdown of Anthropic cutting off Trey’s Claude access: what happened, why it matters, and how data feedback loops, open-weight models, and geopolitics shape this fight.

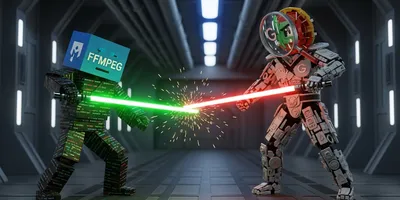

A detailed timeline analysis of the dispute between FFmpeg maintainers and Google security researchers over AI-generated vulnerability reports and volunteer burden.

An in-depth look at the key players in China's rapidly evolving AI landscape, from open-source champions to secretive tech giants.

A chronological overview of AMD's major open-source software/firmware initiatives and the strategic reasons behind the move.

An in-depth look at Singpass, Singapore's revolutionary digital identity system that has transformed citizen-government interactions through secure, convenient online authentication.

A comprehensive technical and legal analysis of the EU's eIDAS Regulation, covering electronic identification, trust services, and cross-border digital authentication standards.

A comprehensive analysis of China's National Network Identity Authentication Public Service within the framework of Cybersecurity Law, Data Security Law, and Personal Information Protection Law.

Comprehensive analysis of France's digital identity evolution - examining FranceConnect, legal frameworks, technical architecture, and the strategic vision for a sovereign, privacy-centric digital identity system in the EU context.

A comprehensive analysis of digital identity systems, comparing government-managed vs private-sector-managed models, single vs multiple identity providers, and global country case studies with lessons for implementation.

A comprehensive comparison of digital identity systems across 57 countries worldwide, examining models, strengths, and lessons learned.

Updated analysis exploring Trump's tariff policies as a mix of multiple advisor factions, including Industrialists, Techno-nationalists, Dynamists, and Trade Warriors, aiming to reorient international economic relations.

Japan signs its largest defense contract since WWII with Australia, marking a return to arms exports and reshaping Indo-Pacific security dynamics.

An analysis of why Germany is ramping up defence spending and how U.S. and NATO pressure contributed.

A comprehensive Q&A addressing common Western misconceptions about China, covering its history, economy, politics, and global ambitions.

Master BMAD Methods to decompose complex software projects into feasible, incremental development tasks. A comprehensive guide to effective project planning, prioritization, and execution.

Analysis of the ongoing debate between Minister of Finance Purbaya and regional governors about parked regional budget funds, revealing systemic reasons for slow APBD absorption.

Build a context-driven agent architecture that prevents context overload and improves AI development workflow efficiency.

Comprehensive analysis of Indonesia's ambitious DME strategy - from coal gasification initiatives to reducing LPG import dependency, featuring Presidential Regulation 109/2020 and the path to energy security by 2030.

Comprehensive analysis of Bahlil Lahadalia's journey from Papua's streets to Indonesia's energy leadership - examining his business empire, political career, and the intersection of entrepreneurship and governance.

A comprehensive analysis of US-Indonesia trade relations from 2016-2025, covering tariff negotiations, reciprocal trade agreements, and the impact of geopolitical shifts on bilateral economic partnerships.

Deep dive into Indonesia's government fund accumulation crisis - Rp285.6T in deposits, Rp357.4T in current accounts, and the ongoing controversy between Minister Purbaya and Governor Dedi Mulyadi over regional fund management.

Comprehensive analysis of Indonesia's ambitious ethanol strategy - from E10 blending mandate to domestic production challenges, Brazil partnerships, and the path to energy sovereignty by 2027.

An in-depth analysis of Indonesia's Coretax tax system improvements, covering technical fixes, cybersecurity enhancements, performance upgrades, and strategic implications for reducing foreign dependencies.

Discover how API providers can drastically affect open-weight model performance, from benchmarks to tool calling accuracy.

Discover five powerful Claude skills that are transforming AI-assisted development in Claude Code.

A detailed chronological overview of the alleged Antam gold forgery case that shocked Indonesia in 2025 — including public discussions and alternative perspectives.

Discover how OpenSpec transforms software development by ensuring perfect alignment between humans and AI coding assistants before any code is written. A comprehensive guide to spec-driven development methodology.

Explore how GitHub Spec Kit revolutionizes software development by making specifications executable through AI-powered workflows. A comprehensive guide to specification-driven development with practical examples and implementation strategies.

Explore the differences between Retrieval-Augmented Generation (RAG) and Cache-Augmented Generation (CAG) for building large language model applications with external data sources.

Discover how to build sophisticated LLM applications using minimalist principles. A deep dive into PocketFlow's philosophy of simplicity over complexity in AI framework design.

Exploring the blurred lines between human and AI-generated music through the lens of parody and creative experimentation. Based on insights from a parody music creator's journey with AI tools.

Learn how to set up and use NVIDIA's 3D generative AI blueprint, which combines ComfyUI with Blender to create AI-textured 3D environments.

Exploring the controversy around tracing in digital art, from the stigma against it to its potential as a learning tool and professional technique.

Learn how to go from zero knowledge to building functional features with any new technology using this proven framework. Based on real experience with the OpenAI Agent SDK.

A comprehensive prompt engineering cheatsheet drawing from IBM techniques and best practices to help you write better prompts and get more reliable AI results.

Understanding the fundamental differences between fuzzy logic systems and neural networks, their histories, applications, and how they complement each other in modern AI systems.

A comprehensive comparison of AI research priorities, government regulations, commercial applications, and training data strategies between the United States and China.